This 4chan Board Became a Factory for Explicit AI ‘Deepfakes.’ Nobody Is Stopping It.

Activity on 4chan's 'Adult Requests' board has nearly tripled as its users victimized untold numbers of women and girls

By Jared Holt

Content warning: This article discusses online sexual abuse and contains explicit language. Reader discretion is advised.

TLDR

Daily activity on a 4chan imageboard dedicated to exchanging sexually explicit and pornographic content has nearly tripled over the last year as its users have embraced AI-generated “deepfakes,” new analysis from Open Measures found.

Users on the board frequently exchange tools, tips, and tricks on how to coerce AI software into producing sexually explicit deepfakes, circumventing the efforts of many AI companies to prevent such use cases of their software.

The board also harbors an emerging micro-economy of users accepting commissions to discreetly generate explicit deepfakes of private and public figures.

Users on the board most often request “deepfake” images of female subjects, sometimes sharing photos of subjects who appear plausibly underage. It’s unclear if the site’s moderators make efforts to verify the age of individuals pictured on the board.

Context

Open Measures has seen usage of generative AI software to perpetuate online sexual abuse increase as the technology becomes more advanced and less cost-prohibitive. Today, AI deepfakes drive an underground economy, hosting tailor-made tools and for-hire services catering to those who seek sexually explicit “deepfakes” – images and videos of people which appear to be authentic but were digitally generated.

The overwhelming majority of those victimized by nonconsensual deepfakes are female and in some cases include children, and the online communities that produce them often display extreme misogynist and male supremacist views. These ecosystems also operate with the same digital infrastructure employed in other forms of cybercrime, which means victims often face cybersecurity risks in addition to social harms.

Policymakers have spent years attempting to counter the nonconsensual distribution of explicit content, including AI deepfakes, but their efforts have severely lagged on the issue. While many countries have proposed or passed legislation criminalizing such material, the enforcement of those laws has lacked urgency and encountered legal complications.

4chan’s ‘Adult Requests’ Board is a Depot for Sexually Explicit ‘Deepfakes’

4chan, an imageboard platform where users are anonymous, created its “Adult Requests” board (also known by its URL suffix, “r”) in 2004. Though 4chan users have historically shared nonconsensual material, including hacked nude photos and face-swapped images, the Adult Requests board initially existed as a venue for users trading and discussing pornography – requesting names of specific adult actresses, finding videos that screenshots originated from, and so on.

But in recent years, Adult Requests users have become increasingly fixated on the production and trade of sexually explicit material made with generative AI software. The majority of threads found on the board today contain user-submitted photos – most often of female subjects – and requests for others to produce fake images depicting them nude or engaged in sexual acts. These requests are fulfilled by users who self-describe as “wizards” (a term that originated in online “incel” subcultures), who share the deepfakes they produce. This back-and-forth takes place in full public view; anyone who visits the board can see the images its users are swapping.

Requests for deepfake images on Adult Requests most often target female figures with some sort of public profile, including politicians, celebrities, actresses, athletes, and social media influencers. But many posts also solicited deepfakes of female subjects that users said they encountered in their personal lives: former romantic partners, coworkers, classmates, neighbors, and family members, among others.

It is unclear whether the board’s moderators make any attempts to verify the ages of individuals in photos its users submit as reference material when requesting explicit deepfake images. Many posts on Adult Requests have included pictures of people who appeared young, plausibly teenagers. (Due to privacy and legal concerns, Open Measures did not attempt to independently identify individuals pictured on the board or verify their age.)

The ‘Adult Requests’ Board Grew as AI ‘Deepfake’ Requests Dominated its Daily Activity

Open Measures decided to dive deeper into activity on Adult Requests after finding its users trading explicit AI-generated images of female athletes in the 2026 Winter Olympic Games. The tools available in our platform allowed us to view historical datasets for the board, where posts are quickly deleted to make way for new ones, and to minimize our exposure to its explicit (and potentially illegal) content.

Editor’s Note: In the interest of protecting victims’ privacy and limiting exposure to potentially illegal content, Open Measures elected to censor explicit material and hyperlinks in screenshots of the imageboard included in this article.

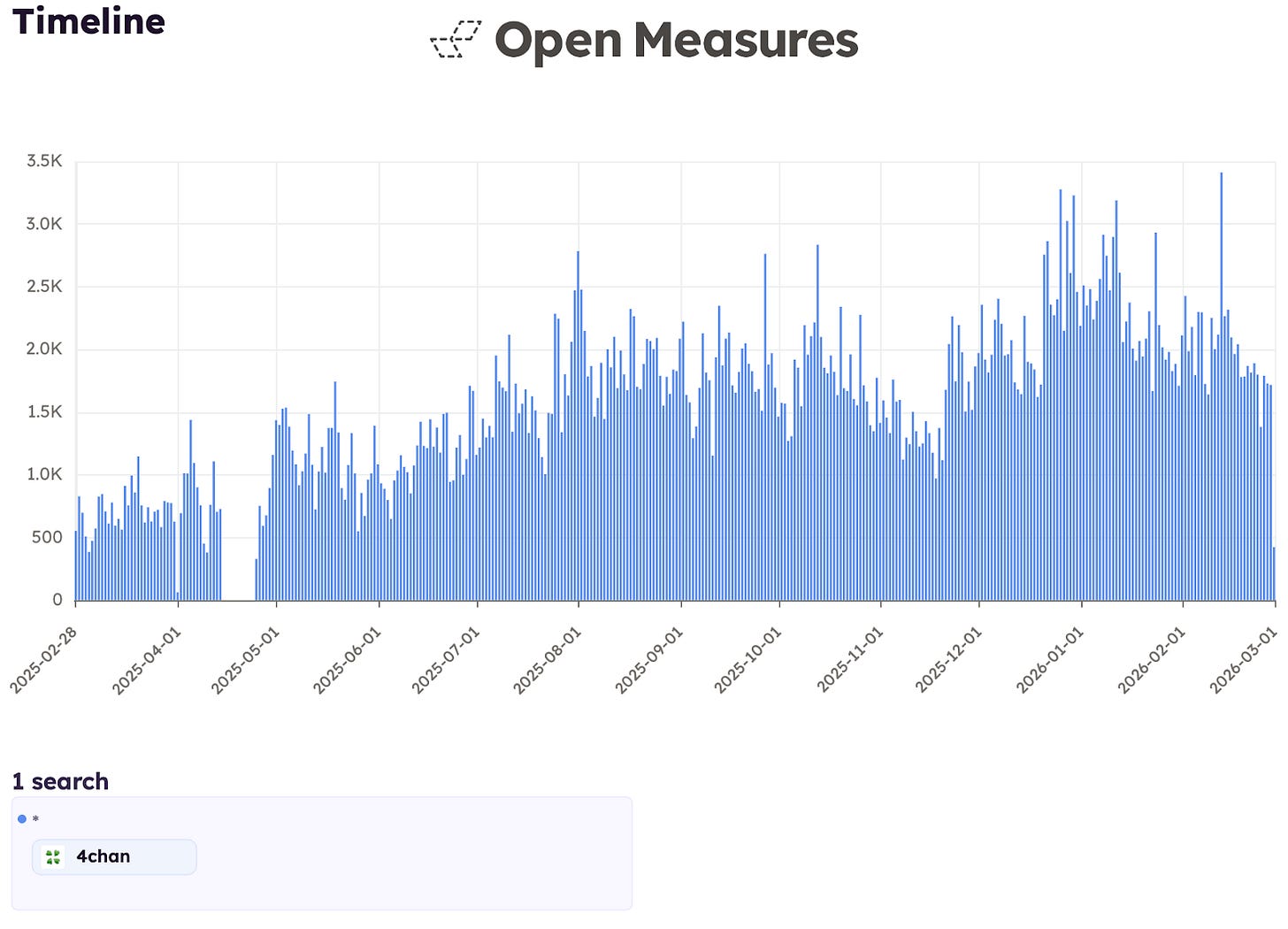

Using our platform’s “Timeline” tool, Open Measures was able to determine that daily user activity on Adult Requests nearly tripled over the last year. In the first four weeks of our analysis period – from Mar. 1 to 29, 2025 – users on the board averaged 709 posts per day; in the final four weeks – from Feb. 1 to 28, 2026 – they averaged 2,017.
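For readers who want to check the arithmetic behind “nearly tripled,” the comparison can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a description of how the Open Measures “Timeline” tool works: the helper names `average_daily_posts` and `growth_factor` are hypothetical, and the only real inputs are the two averages reported above (709 and 2,017 posts per day).

```python
from datetime import date


def average_daily_posts(post_dates, start, end):
    """Mean number of posts per day over the inclusive window [start, end].

    post_dates is any iterable of datetime.date values, one per post.
    (Hypothetical helper for illustration only.)
    """
    days_in_window = (end - start).days + 1
    posts_in_window = sum(1 for d in post_dates if start <= d <= end)
    return posts_in_window / days_in_window


def growth_factor(first_avg, last_avg):
    """How many times larger the later average is than the earlier one."""
    return last_avg / first_avg


# Averages reported in the article for the first and final four weeks.
factor = growth_factor(709, 2017)
print(f"{factor:.2f}x")  # 2.84x, i.e. "nearly tripled"
```

A factor of roughly 2.84 corresponds to an increase of about 184 percent over the first four-week average.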

Based on all archived posts from our analysis period, we found that January 2026 was the most active month on Adult Requests, with a total of 70,000 unique posts. April 2025 – when 4chan went offline for 10 days after a reported hack – was the least active month we saw in the analysis period.

Caption: A chart shows the daily number of posts on 4chan’s “Adult Requests” imageboard recorded by Open Measures between Mar. 1, 2025, and Mar. 1, 2026. Daily activity on the imageboard nearly tripled over the analysis period we reviewed, peaking in January 2026.

More than 10,000 posts Open Measures archived from Adult Requests for the year-long period we examined included specific mentions of “AI.” Thousands more discussed techniques for coaxing AI software into making sexually explicit images.

Board Users Regularly Trade Techniques for Making ‘Deepfakes’

On many 4chan boards, users perpetually re-share posts meant to preserve material they find significant and to continue conversations that would otherwise disappear as threads are deleted from the site. These re-posted threads – dubbed “bakes” among the site’s users – typically appear at the top of the imageboards they’re made in, often making them among the first posts any new visitor sees.

At the time of writing, the most-current “bake” on Adult Requests included hyperlinks to an assortment of guides, tools, and archives related to the production of sexually explicit deepfakes. The contents of that bake have been shared in hundreds of posts since it first appeared in November 2025, often multiple times per day.

Caption: A Mar. 1 post on 4chan’s “Adult Requests” imageboard shares a copied and pasted “bake” that directs the forum’s users to guides, tools, and filesharing folders related to “deepfake” sexually explicit images. Open Measures recorded hundreds of posts containing the exact text of this one since November 2025, often multiple times per day.

Adult Requests users also made more than 3,700 posts last year that discussed techniques for crafting adversarial prompts to bypass the built-in safety guardrails found in many generative AI tools that are meant to prevent them from creating explicit images. Some users asked for assistance; others who succeeded reported back to share which techniques had been most effective.

Caption: A Feb. 28 post on 4chan’s “Adult Requests” imageboard compliments another user’s suggestion for prompting a generative AI tool to produce images containing nudity. The user wrote that the technique was better than others they had tried and remarked: “How the fuck have they not caught this…”

About 3,000 posts on Adult Requests mentioned using low-rank adaptation (LoRA) techniques to make explicit deepfakes. LoRAs are shareable files that refine existing AI models for specific use cases – in this case, generating more-realistic images depicting particular physical traits, sexual acts, and female figures. Once downloaded, these models are typically deployed on local hardware, allowing their users to generate images with minimal oversight.

A Micro-Industry of ‘Deepfake’ Makers Is Profiting Off Adult Requests Users

Our researchers observed that producers of explicit deepfakes frequently advertised their willingness to accept paid commissions from Adult Requests users, often including Discord and Telegram links where other users could contact them more discreetly.

Nearly 2,500 of the posts we reviewed in our analysis period mentioned “Discord” or “Telegram,” and almost all mentions were in posts that discussed paid commissions. The solicitations went both ways, with sellers offering services to interested buyers, and hopeful buyers also trying to contact “wizards” directly, as seen in this Jan. 13 post [sic]:

Looking for a friendly wiz, go with your instincts

I’ve got nudes of exs, pics of cousins that I can share on discord for more magic

A small number of the posts we identified that sought help producing custom deepfakes also indicated that the requests were motivated by malicious intent. In one post made to the board on Jan. 7, a user attempted to contact someone who would help them “destroy” a man’s life with private information they had about him:

The fucker on the Internet is fucked up and I need help to destroy his life on the Internet

[...]

I uploaded all the photos and videos that this asshole sent me, and there is also his passport. I can give his nicknames in telegram and discord, along with his mobile number

Though self-aware posts were extreme outliers in the board’s broader discourse, a small number of users seemed ambivalent about recent activity on Adult Requests and considered whether much of the board’s recent content could be illegal. One such post from Feb. 26 read [sic]:

Let’s be real about /r/ for a second. It’s moderated (dubiously so, no doubt) but not very. In the old days there might have been a good reason to keep an eye on things from time to time but it was mostly just guys trying to identify porn actresses and such. These days, aside from this thing here, it’s likely 90% guys requesting people make revenge porn if their exes or school/ work friends. Or sisters. Jesus. But how do you actually moderate something that’s clearly illegal most everywhere? Generally you either don’t and let things expire and disappear on their own or you’re constantly moderating everything. So my thinking is that moderating Realistic Parody AI falls mostly under the former. That is to say, they mostly don’t. Anyhow, goodnight goobers.

What We’re Watching

Over the last year, 4chan’s “Adult Requests” board has grown significantly more active, and the bulk of that activity has consisted of requests for explicit deepfake images of female subjects. These findings highlight an extreme and urgent risk facing women, and potentially children, online today.

Our researchers will continue to monitor Adult Requests to better understand how generative AI tools are being exploited to inflict sexual abuse against victims and contribute to broader cybersecurity threats.

Identify online harms with the Open Measures platform.

Organizations use Open Measures every day to track trends related to networks of influence, coordinated harassment campaigns, and state-backed info ops. Click here to book a demo.